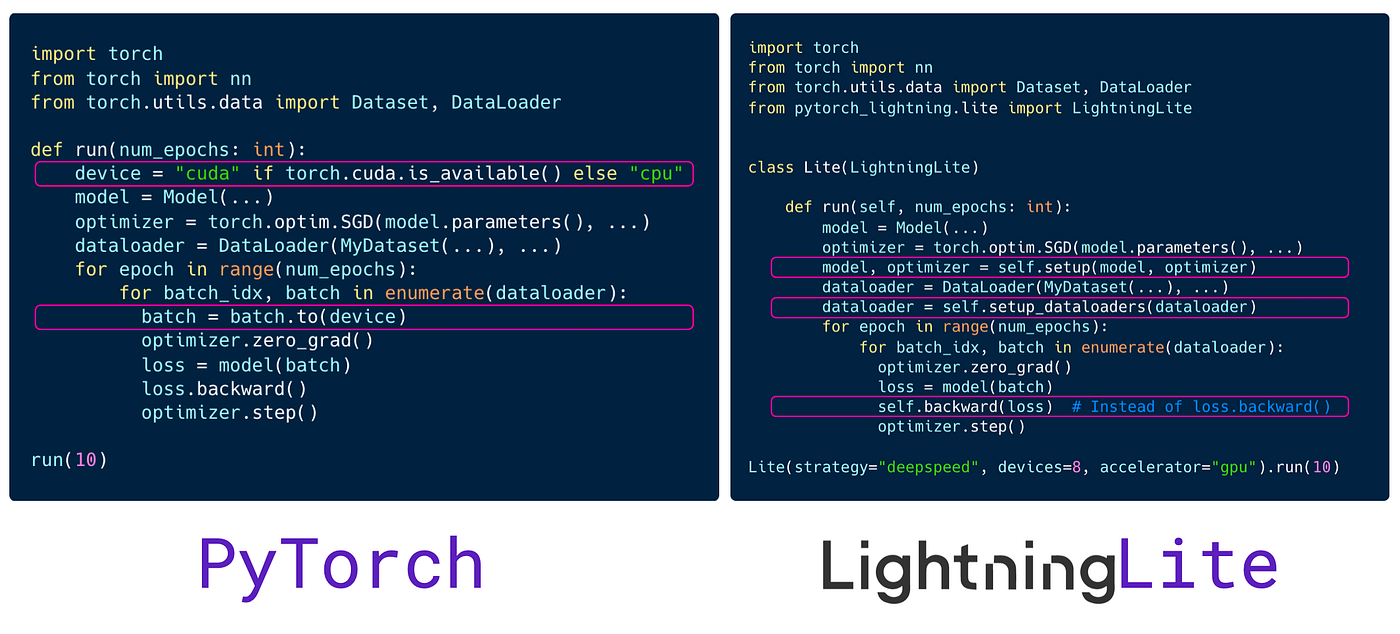

Scale your PyTorch code with LightningLite | by PyTorch Lightning team | PyTorch Lightning Developer Blog

PyTorch-Direct: Introducing Deep Learning Framework with GPU-Centric Data Access for Faster Large GNN Training | NVIDIA On-Demand

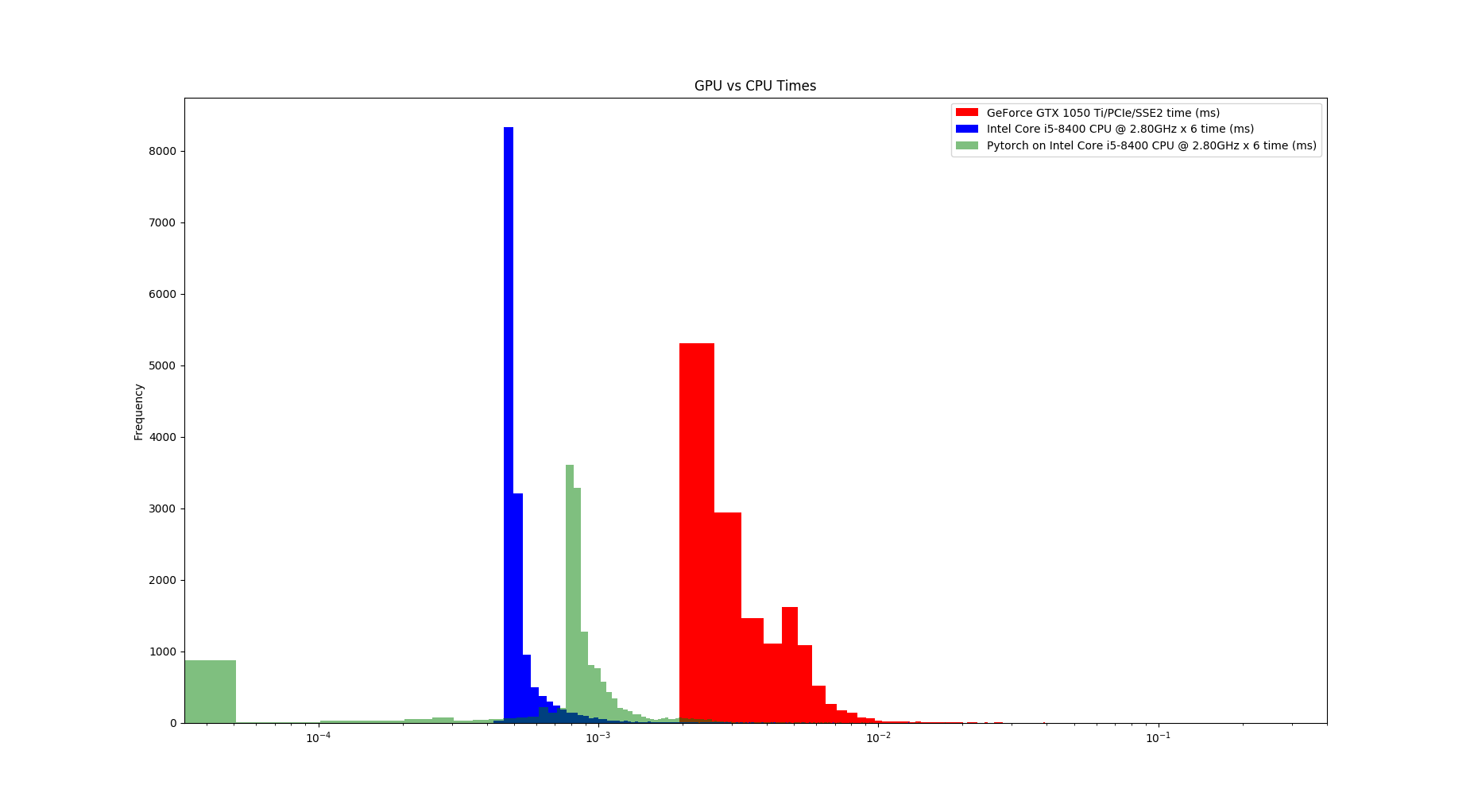

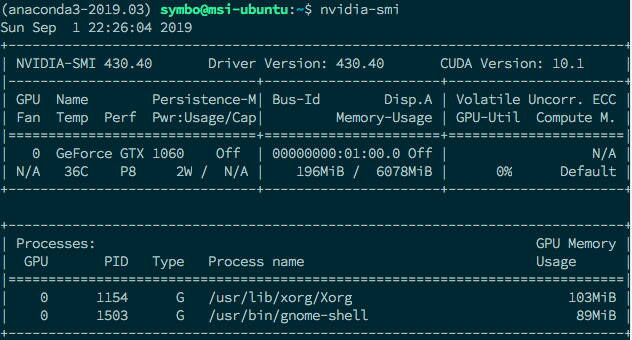

PyTorch: Switching to the GPU. How and Why to train models on the GPU… | by Dario Radečić | Towards Data Science

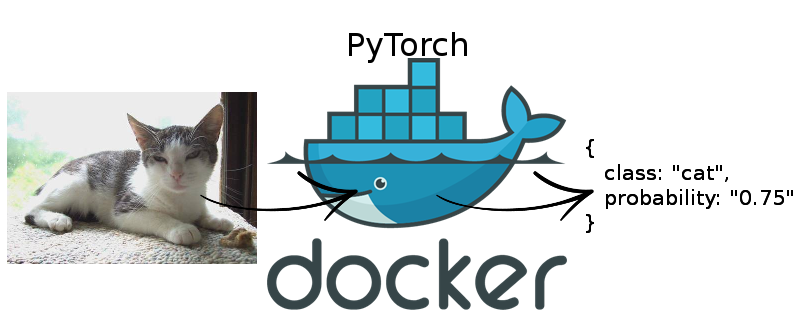

PyTorch GPU inference with Docker and Flask :: Päpper's Machine Learning Blog - This blog features state-of-the-art applications in machine learning with a lot of PyTorch samples and deep

Use GPU in your PyTorch code. Recently I installed my gaming notebook… | by Marvin Wang, Min | AI³ | Theory, Practice, Business | Medium